The Google Interactive Canvas control could be the silver bullet that unlocks the commercial potential of smart displays, and the start of a revolution in how we use the internet through natural language.

One of the real restrictions I have found with the Google Assistant has been its limited visual controls, which were previously restricted to images, lists, carousels and buttons. The Interactive Canvas control brings the full power of HTML, CSS and JavaScript to the Google Assistant.

From a technical perspective, the Interactive Canvas Control (ICC) is essentially a voice-enabled iframe with a few restrictions: developers have no access to the camera or mic, for now!

Google Assistant Interactive Canvas Demo Video

Google offer their own basic example / walkthrough of how to code an Interactive Canvas control, but I found it a little too detailed to get my head around and understand what was going on. The process feels a little clouded by the game it produces. Also, their example code is a mixture of Node.js on the server and JavaScript in the browser, and I was looking for an easy example in C# .NET and JavaScript.

It's early days for the ICC, and I could not find any examples out there, so I decided to write my own. You can download my basic example of how to create a Google Assistant Interactive Canvas Control using C# .NET from GitHub.

How Does the Google Interactive Canvas Control Work?

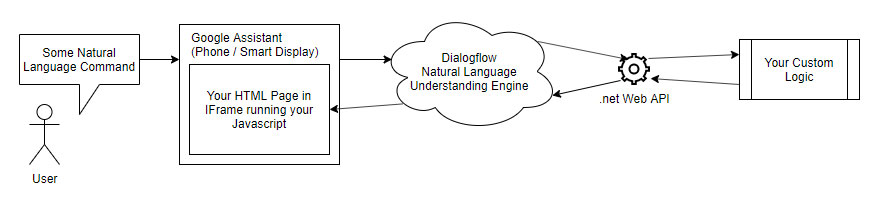

The best way to describe how the new voice enabled Google Assistant control works is through a diagram:

So, what is going on in the diagram above?

- User speaks a command to the Interactive Canvas control via a smart display or phone.

- User intent is determined by Dialogflow and passed on to your C# .NET Web API for an answer.

- Web API produces an HTML response based on your custom logic.

- Google pass the data within your HTML response into your iframe HTML page.

- Your own custom JavaScript within the web page responds to the data passed to it by updating the visual display.

- User sees the response via your HTML page.
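
The last few steps above, where your page receives data and updates the display, can be sketched in JavaScript. This is only a minimal illustration and not the code from my GitHub example: it assumes Google's Interactive Canvas client script has been loaded in the page so that the global `interactiveCanvas` object and its `ready()` method (with `onUpdate` and `onTtsMark` callbacks) are available. The `view` object, the `heading` and `colour` fields, the `heading` element id, and the `applyUpdate` and `render` helpers are all hypothetical names chosen for the sketch; the data your page actually receives is whatever your Web API put in its response.

```javascript
// Hypothetical view state for the page; the field names are illustrative only.
const view = { heading: "Waiting for a command...", colour: "white" };

// Merge the data sent by the webhook into a copy of the current view state.
function applyUpdate(current, data) {
  // Assumption: the data may arrive as a single object or as an array of
  // objects depending on the client version, so normalise to an array first.
  const updates = Array.isArray(data) ? data : [data];
  return updates.reduce((state, update) => ({ ...state, ...update }), { ...current });
}

// Push the view state into the DOM. Skipped when there is no document,
// which lets the logic above be exercised outside a browser.
function render(state) {
  if (typeof document === "undefined") return;
  document.body.style.backgroundColor = state.colour;
  const heading = document.getElementById("heading"); // hypothetical element id
  if (heading) heading.textContent = state.heading;
}

// Callbacks handed to the Interactive Canvas client library.
const callbacks = {
  // Called when a webhook response carries new data for the page.
  onUpdate(data) {
    Object.assign(view, applyUpdate(view, data));
    render(view);
  },
  // Called at marks in the spoken response; not used in this sketch.
  onTtsMark(markName) {},
};

// Register the callbacks only when the client library is actually present.
if (typeof interactiveCanvas !== "undefined") {
  interactiveCanvas.ready(callbacks);
}
```

Keeping `applyUpdate` separate from `render` means the state handling can be checked without a smart display: calling `callbacks.onUpdate({ colour: "red" })` by hand should change `view.colour` in the same way a real response would.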

That's about it! My code example of the Google Assistant Interactive Canvas on GitHub is deliberately simple, and I have fully documented all aspects so you can get a better understanding of what is going on.

For a more 'commercialised' example of this technology, and how I think businesses can start to monetise this medium, have a read of how I think the Google Assistant can be used for ecommerce on smart displays such as the new Google Nest Hub Max.
